Google’s Robots.txt Docs Expand, Deep Links Get Rules, EU Steps In – SEO Pulse via @sejournal, @MattGSouthern

Welcome to the week’s Pulse: updates affect how deep links appear in your snippets, how your robots.txt gets parsed, how agentic features work in Search, and how the EU’s data-sharing rules apply to AI chatbots.

Here’s what matters for you and your work.

Google Lists Best Practices For Read More Deep Links

Google updated its snippet documentation with a new section on “Read more” deep links in Search results. The documentation lists three best practices that can increase the likelihood of these links appearing.

Key facts: Content must be immediately visible to a human on page load; content hidden behind expandable sections or tabbed interfaces can reduce the likelihood of these links appearing. Sections should use H2 or H3 headings. The snippet text needs to match the content that appears on the page, and content that loads only after scrolling or interaction may further reduce the likelihood.

Why This Matters

The three practices are the first specific guidance Google has published on this feature. Sites using expandable FAQ sections, tabbed product detail areas, or scroll-triggered content for core information may see fewer deep links in their snippets compared with sites that render the same content on page load.

The guidance matches a pattern Google has applied to other Search features. Content that renders without user interaction is more likely to appear in enhanced display.

Slobodan Manić, founder of No Hacks, made a related observation on LinkedIn:

“The documentation is framed around one snippet behavior (read more deep links in search results), but the language Google chose reads as a general preference. ‘Content immediately visible to a human’ is the structural instruction, not a read-more-specific tip.”

Manić’s point extends his April 16 IMHO interview with Managing Editor Shelley Walsh, where he argued that most websites are structurally broken for AI agents. He argues that search crawlers and AI agents now face the same structural problem, and the audit is the same for both.

For existing pages, the audit question is whether key information is contained within a click-to-expand element. If a page already has a “Read more” deep link for one section, that section’s structure serves as a guide to what works. For other sections on the same page, replicating that structure may also improve their chances.
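One way to start that audit is a small script that lists a page's H2 and H3 headings and notes which ones sit inside a collapsed element. The sketch below is only a rough first pass under stated assumptions: it treats a native `<details>` element without the `open` attribute as the "click-to-expand" case, it reads static HTML only, and the class and function names are hypothetical. It will not detect JavaScript-driven accordions, tabs, or scroll-triggered content, and it says nothing about how Google itself evaluates visibility.

```python
from html.parser import HTMLParser


class CollapsedHeadingAudit(HTMLParser):
    """Collect H2/H3 headings and note whether each sits in a collapsed <details>."""

    def __init__(self):
        super().__init__()
        self.details_stack = []  # one bool per open <details>: True if collapsed
        self.current = None      # [tag, text parts, collapsed?] while inside a heading
        self.headings = []       # (tag, text, inside_collapsed_details) tuples

    def handle_starttag(self, tag, attrs):
        if tag == "details":
            # Assumption: <details> without "open" is hidden on page load.
            self.details_stack.append("open" not in dict(attrs))
        elif tag in ("h2", "h3"):
            self.current = [tag, [], any(self.details_stack)]

    def handle_endtag(self, tag):
        if tag == "details" and self.details_stack:
            self.details_stack.pop()
        elif self.current is not None and tag == self.current[0]:
            name, parts, collapsed = self.current
            self.headings.append((name, "".join(parts).strip(), collapsed))
            self.current = None

    def handle_data(self, data):
        # Only keep text that appears inside an H2/H3 heading.
        if self.current is not None:
            self.current[1].append(data)


def audit_headings(html):
    """Return (tag, heading text, inside_collapsed_details) for each H2/H3."""
    parser = CollapsedHeadingAudit()
    parser.feed(html)
    parser.close()
    return parser.headings


if __name__ == "__main__":
    sample = """
    <h2>Pricing</h2>
    <details><summary>More</summary><h3>Refund policy</h3></details>
    <details open><h3>Shipping</h3></details>
    """
    for tag, text, collapsed in audit_headings(sample):
        status = "inside collapsed <details>" if collapsed else "visible on load"
        print(f"{tag}: {text} ({status})")
```

Headings flagged as collapsed are candidates for a closer look, not confirmed problems. A rendered-page check is still needed for anything that depends on scripts.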

Google describes the guidance as best practices that can “increase the likelihood” of deep links appearing. That hedging matters because this is not a list of requirements, and following all three does not guarantee the links appear.

Read our full coverage: Google Lists Best Practices For Read More Deep Links

Google May Expand Its Robots.txt Unsupported Rules List

Google may add rules to its robots.txt documentation based on analysis of real-world data collected through HTTP Archive. Gary Illyes and Martin Splitt described the project on the latest Search Off the Record podcast.

Key facts: Google’s team analyzed the most frequently used unsupported rules in robots.txt files across millions of URLs indexed by the HTTP Archive. Illyes said the team plans to document the top 10 to 15 most-used unsupported rules, meaning those beyond the supported user-agent, allow, disallow, and sitemap fields. He also said the parser may expand the typos it accepts for disallow, though he did not commit to a timeline or name specific typos.

Why This Matters

If Google documents more unsupported directives, sites using custom or third-party rules will have clearer guidance on what Google ignores.

Anyone maintaining a robots.txt file with rules beyond user-agent, allow, disallow, and sitemap should audit for directives that have never worked for Google. The HTTP Archive data is publicly queryable on BigQuery, so the same distribution Google used is available to anyone who wants to examine it.

The typo tolerance is the more speculative part. Illyes’ phrasing implies that the parser already accepts some misspellings of “disallow,” and more may be honored over time. Audit any spelling variants now and correct them, rather than assuming they will be ignored.
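A quick way to run that audit is to scan the file for any field name outside the four listed above. The sketch below is a minimal illustration, not Google's parser: the function name is hypothetical, the similarity cutoff used to flag likely misspellings is an arbitrary assumption, and the sample directives (`Crawl-delay`, `Noindex`, `Disalow`) are illustrative examples chosen here rather than rules or typos named in the podcast.

```python
import difflib

# Fields the article lists as supported by Google's robots.txt parser.
SUPPORTED = ["allow", "disallow", "sitemap", "user-agent"]


def audit_robots_txt(text):
    """Return (line_number, field, note) for each line with an unlisted field."""
    findings = []
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or ":" not in line:
            continue
        field = line.split(":", 1)[0].strip().lower()
        if field in SUPPORTED:
            continue
        # Assumption: a close string match suggests a misspelling. This is a
        # heuristic for human review, not a list of typos Google accepts.
        close = difflib.get_close_matches(field, SUPPORTED, n=1, cutoff=0.8)
        if close:
            findings.append((number, field, f"possible typo of '{close[0]}'"))
        else:
            findings.append((number, field, "not in the supported list"))
    return findings


if __name__ == "__main__":
    sample = """\
User-agent: *
Disalow: /private/
Crawl-delay: 10
Noindex: /tmp/
Sitemap: https://example.com/sitemap.xml
"""
    for number, field, note in audit_robots_txt(sample):
        print(f"line {number}: {field} ({note})")
```

Lines flagged as possible typos are the ones to correct first, since it is unclear which misspellings the parser honors today or will honor later.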

Read our full coverage: Google May Expand Unsupported Robots.txt Rules List

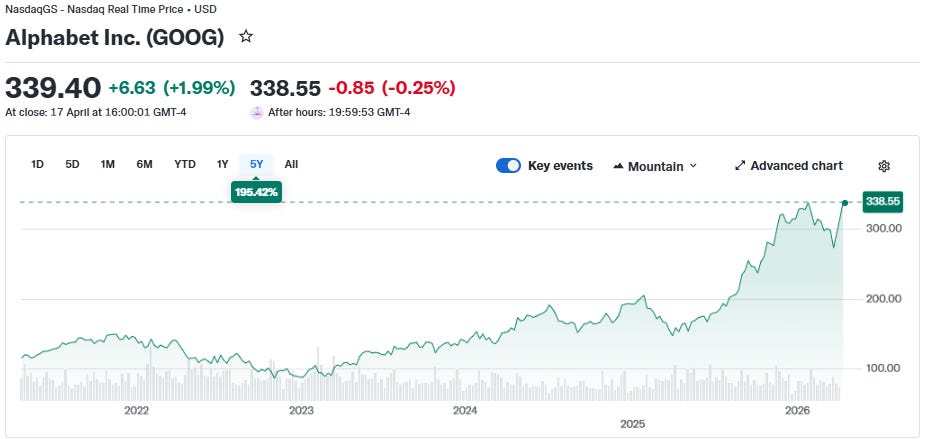

EU Proposes Google Share Search Data With Rivals And AI Chatbots

The European Commission sent preliminary findings proposing that Google share search data with rival search engines across the EU and EEA, including AI chatbots that qualify as online search engines under the DMA. The measures are not yet binding, with a public consultation open until May 1 and a final decision due by July 27.

Key facts: The proposal covers four data categories shared on fair, reasonable, and non-discriminatory terms. The categories are ranking, query, click, and view data. Eligibility extends to AI chatbot providers that meet the DMA’s definition of online search engines. If the Commission maintains eligibility through the final decision, qualifying providers could gain access to anonymized Google Search data under the Commission’s proposed terms.

Why This Matters

This proposal explicitly extends search-engine data-sharing eligibility to AI chatbots under the DMA. If the eligibility survives the consultation, the regulatory category of “search engine” would then include products that most search marketing work has treated as a separate category.

The consequences vary depending on where you operate. For sites optimizing for EU/EEA visibility, the change could broaden the scope of where anonymized search signals flow. AI products competing with Google in that market could use the data to improve their retrieval and ranking systems, which could, in turn, affect which content they cite.

Outside the EU, the direct regulatory effect is zero. The category definition is a different matter. How the Commission draws the line between “AI chatbot” and “AI chatbot that qualifies as a search engine” is likely to be cited in future proceedings.

The eligibility question is the story to watch through May 1. If the Commission narrows the AI chatbot criteria in response to consultation feedback, the implications stay regulatory. If it holds the line, that would set a material precedent for how AI search is classified.

Read our full coverage: Google May Have To Share Search Data With Rivals

Google Adds New Task-Based Search Features

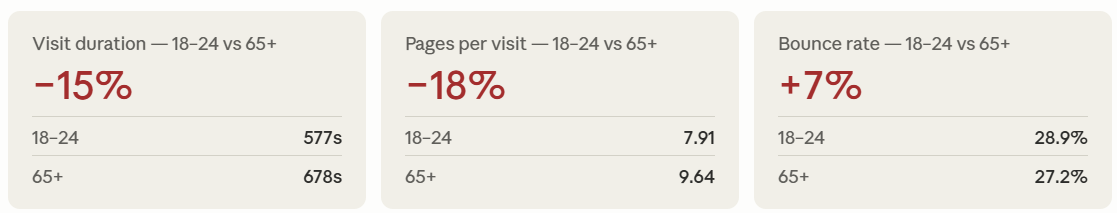

Google introduced new Search features that continue its evolution toward task completion. Users can now track price drops for individual hotels via a new toggle in Search, and Google is adding the ability to launch AI agents directly from AI Mode.

Key facts: Hotel price tracking is available globally through a toggle in the search bar. When prices drop for a tracked hotel, Google sends an email alert. The AI agent launched from AI Mode allows users to initiate tasks handled by AI within the search interface. Rose Yao, a Google Search product leader, posted about the features on X.

Why This Matters

Each task-based feature moves a process that previously started on another site into Google’s own surface. Hotel price tracking has existed at the city level for months. Expansion to individual hotels adds a new signal that users can set inside Google rather than on hotel or aggregator sites.

Direct-booking visibility depends on being inside Google’s ecosystem. Sites relying on price-drop alerts as a return-trigger for users may see some of that engagement reallocated to Google’s tracking UI. For hotel brands, this raises the stakes for ensuring individual hotel pages are fully populated in Google Business Profile and hotel feeds.

On LinkedIn, Daniel Foley Carter connected the feature to a broader pattern:

“Google’s AI overviews, AI mode and now in-frame functionality for SERP + SITE is just Google eating more and more into traffic opportunities. Everything Google told US not to do its doing itself. SPAM / LOW VALUE CONTENT – don’t resummarise other peoples content – Google does it.”

The AI agent launch is more speculative. Google has not published detailed documentation explaining what kinds of tasks users can delegate or how sources get cited. The feature confirms that agentic search, described by Sundar Pichai as “search as an agent manager,” is appearing incrementally in Search rather than as a single launch.

Read Roger Montti’s full coverage: Google Adds New Tasked-Based Search Features

Theme Of The Week: The Rules Are Getting Written

Each story this week spells out something that was previously implicit or underway.

Google signaled plans to expand what its robots.txt documentation covers. The company listed specific practices that can increase the likelihood of “Read more” deep links appearing. The European Commission proposed measures that extend search-engine data-sharing eligibility to AI chatbots under the DMA. And task-based features that Sundar Pichai described in interviews are rolling out as toggles in the search bar.

For your day-to-day, the ground gets firmer. Fewer questions are judgment calls. What does and doesn’t qualify, what Google supports, and what counts as a search engine to a regulator are all getting written down. That works to your advantage when it means clearer audit criteria, and against you when “we weren’t sure” is no longer a defensible answer.

https://www.searchenginejournal.com/seo-pulse-googles-robots-txt-docs-expand-deep-links-get-rules-eu-steps-in/572877/