Condé Nast user database reportedly breached, Ars unaffected

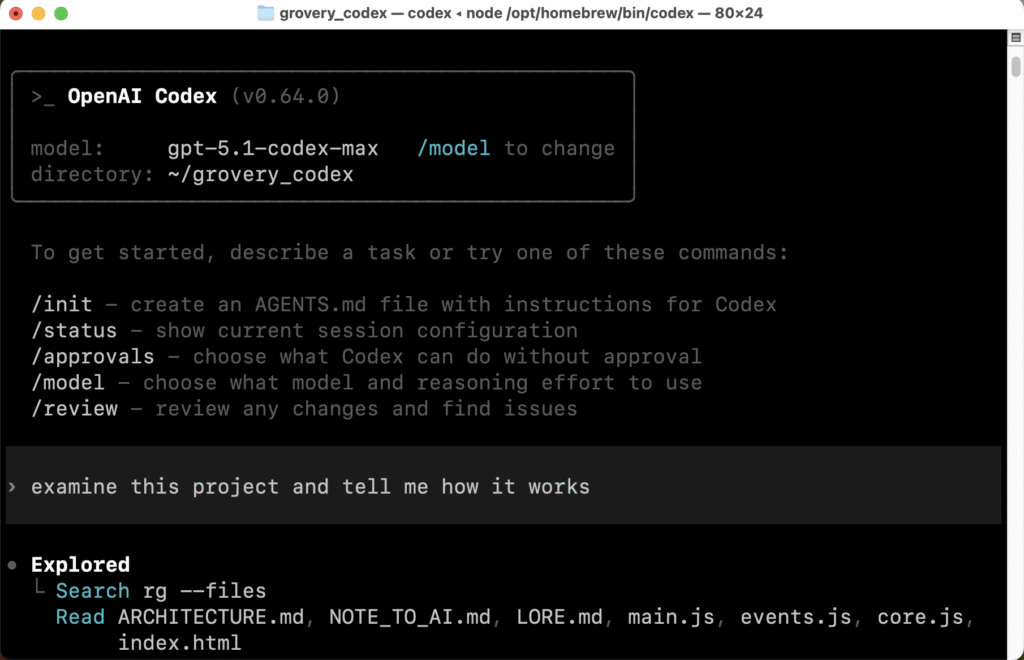

Earlier this month, a hacker named Lovely claimed to have breached a Condé Nast user database and released a list of more than 2.3 million user records from our sister publication WIRED. The released materials contain demographic information (name, email, address, phone, etc.) but no passwords.

The hacker also says that they will release an additional 40 million records from other Condé Nast properties, including our other sister publications Vogue, The New Yorker, Vanity Fair, and more. Of critical note to our readers, Ars Technica was not affected, as we run on our own bespoke tech stack.

The hacker said that they had urged Condé Nast to patch vulnerabilities to no avail. “Condé Nast does not care about the security of their users data,” the hacker wrote. “It took us an entire month to convince them to fix the vulnerabilities on their websites. We will leak more of their users’ data (40+ million) over the next few weeks. Enjoy!”

It’s unclear how altruistic the motive really was. DataBreaches.Net says that Lovely misled the site into believing that the hacker was trying to help patch vulnerabilities when, in reality, the hacker appears to be a “cybercriminal” looking for a payout. “As for ‘Lovely,’ they played me. Condé Nast should never pay them a dime, and no one else should ever, as their word clearly cannot be trusted,” wrote DataBreaches.Net.

Condé Nast has not issued a statement, and we have not been informed internally of the hack (which is not surprising, since Ars is not affected).

Hudson Rock’s InfoStealers has an excellent rundown of what has been exposed.

https://arstechnica.com/information-technology/2025/12/conde-nast-user-database-reportedly-breached-ars-unaffected/