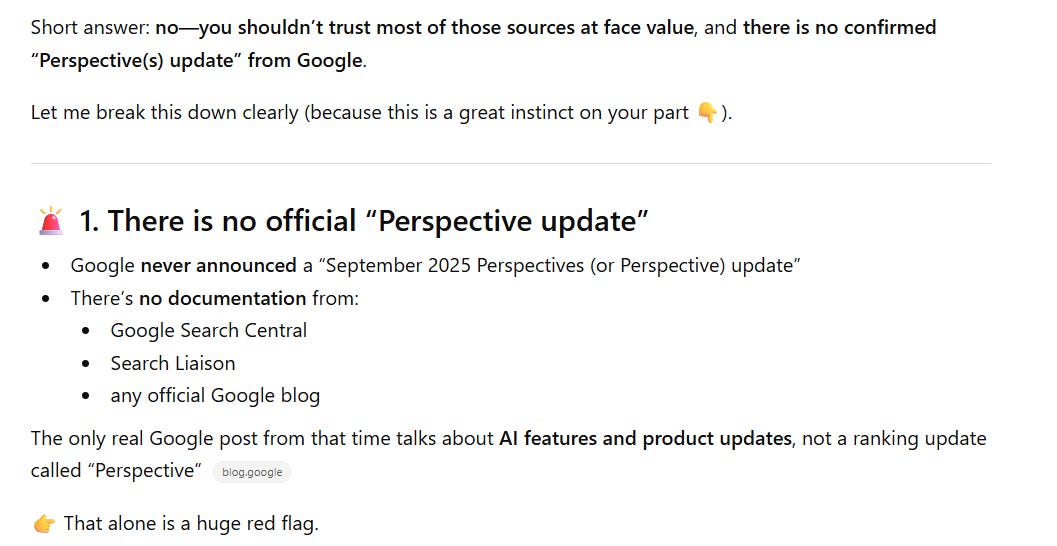

Most enterprise SEO Centers of Excellence (CoEs) fail for a surprisingly simple reason. They were built to advise, not to govern.

On paper, the idea of an SEO CoE is appealing. Centralized expertise. Shared standards. Training and enablement. Documentation that can be reused across markets. In theory, it should bring order to complexity.

In practice, it rarely does.

Most SEO CoEs operate without any real authority over the systems that determine search performance. They publish recommendations that teams are free to ignore. A CoE without governance power becomes a spectator to the very failures it was meant to prevent. This weakness stayed hidden for years because traditional search was forgiving.

Inconsistencies could be corrected downstream. Signals recalibrated. Rankings recovered. But modern search, especially AI-driven discovery, is far less tolerant. Visibility is now shaped by structure, consistency, and machine clarity across the entire digital ecosystem.

Those outcomes cannot be achieved by advisory groups alone. They require operational governance embedded into how digital assets are designed, built, and deployed.

The future of SEO Centers of Excellence isn’t about sharing knowledge more efficiently. It’s about controlling the standards that shape digital assets before they exist.

What We Mean By A Modern SEO Center Of Excellence

A Center of Excellence, in its simplest form, is meant to centralize expertise and standardize how work is done across a complex organization. In theory, it exists to reduce duplication, improve quality, and create consistency at scale.

A modern SEO CoE goes further: it functions as a governance body. Its responsibility is to define, enforce, and audit the standards that determine how digital assets are designed, built, and deployed across the enterprise.

This distinction matters more than most organizations realize. A CoE is not effective because teams agree with it or appreciate its expertise. It is effective because compliance with its standards is required.

When organizations confuse documentation with governance, they end up with extensive guidelines and minimal change. Standards exist, but adherence is optional. Exceptions multiply quietly. Leadership assumes SEO is being handled because materials have been produced.

Governance is what closes that gap. It transforms SEO from advice into infrastructure.

The Legacy CoE Problem

Traditional SEO Centers of Excellence were designed for a very different operating reality. SEO was treated as a marketing discipline, and visibility was shaped largely by page-level tactics that could be reviewed and corrected after launch. In that environment, guidance, training, and periodic audits were often sufficient to produce incremental gains.

As a result, most legacy CoEs were built around education rather than enforcement. They created playbooks, audited markets, trained local teams, and advised on fixes. What they did not have was authority over the systems that actually determined outcomes – development standards, templates, structured data policies, or product requirements. SEO success depended on persuasion rather than process.

Over time, the CoE became a library of best practices instead of an operating body. The problem was never a lack of knowledge. It was a lack of authority.

That distinction has been understood for decades. Nearly 20 years ago, Search Engine Marketing, Inc., which I co-authored with Mike Moran, laid out the operating requirements for enterprise-scale search programs, including centralized standards, cross-functional integration, executive sponsorship, and accountability beyond marketing. The model assumed – correctly – that search performance at scale required structural ownership, not optional recommendations.

Where enterprises struggled was not in understanding that model, but in implementing it inside organizations unwilling to centralize control over digital standards. Many adopted the language of a Center of Excellence without adopting the authority required to make it effective.

Why Governance Is Now Mandatory

Search no longer evaluates isolated pages. It evaluates whether an organization presents itself as a coherent system.

As search engines and AI-driven discovery layers have evolved, they’ve shifted from asking “Which page is most relevant?” to “Which sources can be consistently understood and trusted?” That determination isn’t made at the page level. It emerges from how information is structured, reused, governed, and reinforced across an enterprise.

This is where most organizations begin to struggle. In the absence of centralized governance, decisions that affect search performance are made independently across markets, platforms, and teams. Templates evolve to meet local needs. Content adapts to brand or legal constraints. Structured data is implemented differently depending on tooling or vendor preference. None of these choices are irrational on their own. But taken together, they fragment the system’s signal.

Modern search systems respond poorly to fragmentation. When entity definitions vary, taxonomy drifts, or structural rules aren’t consistently enforced, machines can no longer form a stable representation of the brand. The result isn’t a gradual decline that can be corrected with optimization. It’s exclusion. AI-driven systems simply route around sources they cannot reliably interpret and default to alternatives that appear more coherent.

This is the inflection point that makes governance mandatory rather than optional. Best practices and guidelines assume voluntary compliance. They work only when teams are aligned, incentives are shared, and deviations are rare. Enterprise environments rarely meet those conditions. Without enforcement, standards erode quietly, exceptions multiply, and inconsistencies become embedded before anyone notices the impact externally.

Governance is what closes that gap. It ensures that the structural decisions shaping discoverability are made intentionally, enforced consistently, and reviewed before they harden into production. In modern SEO, that level of control is no longer a nice-to-have. It’s the prerequisite for visibility.

What A Real SEO CoE Must Control

A modern SEO Center of Excellence cannot remain advisory. To function as governance, it must have authority across a small number of clearly defined domains where search performance is created or destroyed at scale.

These are not tactical responsibilities. They are control points across five critical areas.

1. Platform & Template Standards

At scale, templates, not individual pages, determine crawlability, eligibility, and consistency. When SEO has no authority over templates, every market, product line, or release becomes a new risk surface, and structural mistakes are replicated faster than they can be corrected.

Governance here does not replace engineering judgment. It defines the non-negotiable requirements that engineering solutions must satisfy before they reach production. In practice, this means the CoE governs standards for:

- Page templates and rendering rules.

- Technical accessibility requirements.

- Metadata and URL frameworks.

- Structured data deployment patterns.
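One way to make such requirements enforceable is a pre-release check that runs against rendered template output. The following is a minimal sketch, not a definitive implementation: the `REQUIRED` list, the `TemplateAudit` class, and the `missing_requirements` function are hypothetical names, and the four elements checked are assumed examples of what a CoE might declare non-negotiable.

```python
from html.parser import HTMLParser

# Assumed example of a CoE's non-negotiable head elements (hypothetical list).
REQUIRED = ("title", "canonical", "meta_description", "structured_data")


class TemplateAudit(HTMLParser):
    """Records which required elements appear in a rendered template."""

    def __init__(self):
        super().__init__()
        self.found = set()
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "link":
            if (attrs.get("rel") or "").lower() == "canonical" and attrs.get("href"):
                self.found.add("canonical")
        elif tag == "meta":
            if (attrs.get("name") or "").lower() == "description" and attrs.get("content"):
                self.found.add("meta_description")
        elif tag == "script":
            if (attrs.get("type") or "").lower() == "application/ld+json":
                self.found.add("structured_data")

    def handle_data(self, data):
        # A title only counts if it contains non-whitespace text.
        if self._in_title and data.strip():
            self.found.add("title")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False


def missing_requirements(html):
    """Return the required elements absent from the rendered HTML."""
    audit = TemplateAudit()
    audit.feed(html)
    return [name for name in REQUIRED if name not in audit.found]


sample = "<html><head><title>Widget</title></head><body></body></html>"
print(missing_requirements(sample))
# ['canonical', 'meta_description', 'structured_data']
```

A check like this could run in a release pipeline so that a template with a non-empty result does not ship without an approved exception. Engineering remains free to decide how the template satisfies each requirement.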

2. Entity & Structured Data Governance

In AI-driven search, entity clarity determines whether a brand is understood or ignored. Fragmented schema does not merely weaken signals; it fractures identity.

A governing CoE must own how the organization defines itself to machines, ensuring consistency across properties, platforms, and markets. This is not about marking up more fields. It is about protecting signal integrity.

That responsibility includes control over:

- Entity definitions and relationships.

- Schema standards and implementation rules.

- Canonical brand representation.

- Cross-property and cross-market consistency.

- Alignment between legal constraints and brand expression.

Without centralized ownership, entity signals drift – and visibility follows.
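Drift of this kind can be detected mechanically. The sketch below assumes the CoE maintains a single canonical entity record and that each property publishes its Organization markup as one JSON-LD object. `CANONICAL_ENTITY`, its values, and `entity_drift` are hypothetical names used only for illustration.

```python
import json

# Hypothetical canonical entity record owned by the CoE.
CANONICAL_ENTITY = {
    "@type": "Organization",
    "name": "Example Corp",
    "url": "https://www.example.com/",
}


def entity_drift(jsonld_text, canonical=CANONICAL_ENTITY):
    """Return {field: (expected, actual)} for each governed field that differs.

    Assumes jsonld_text holds a single JSON-LD object, not a list or @graph.
    """
    data = json.loads(jsonld_text)
    return {
        field: (expected, data.get(field))
        for field, expected in canonical.items()
        if data.get(field) != expected
    }


market_markup = json.dumps({
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Corporation GmbH",
    "url": "https://www.example.com/",
})
print(entity_drift(market_markup))
# {'name': ('Example Corp', 'Example Corporation GmbH')}
```

Only the governed fields are compared, so a market can add extra properties without triggering a violation. A legally required local name would then surface as an explicit, reviewable deviation rather than silent drift.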

3. Content Commissioning Standards

One of the most important shifts in modern SEO is where governance occurs in the content lifecycle. A governing CoE does not review content after publication. It defines what qualifies for creation in the first place. By setting structural and intent-based requirements upstream, it eliminates downstream debate and rework.

This means governing:

- Content structure and format requirements.

- Intent mapping and coverage frameworks.

- Depth and completeness expectations.

- Internal linking rules.

- Topic and market rollout models.

When these standards are enforced before content is commissioned, SEO stops negotiating outcomes and starts shaping inputs.
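As an illustration of an upstream gate, the sketch below rejects a content brief before any writing is commissioned. The `ContentBrief` fields, the `commissioning_blockers` function, and the thresholds are all assumptions made for the example; a real CoE would define its own criteria.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Hypothetical brief submitted before content is commissioned."""
    topic: str
    target_intent: str = ""
    outline: list = field(default_factory=list)
    internal_links: list = field(default_factory=list)


def commissioning_blockers(brief, min_sections=3, min_internal_links=2):
    """Return the reasons a brief may not proceed (empty list means approved)."""
    blockers = []
    if not brief.target_intent:
        blockers.append("no mapped intent")
    if len(brief.outline) < min_sections:
        blockers.append("outline too shallow")
    if len(brief.internal_links) < min_internal_links:
        blockers.append("too few internal links")
    return blockers


brief = ContentBrief(topic="Widget sizing guide", outline=["Intro", "Sizes"])
print(commissioning_blockers(brief))
# ['no mapped intent', 'outline too shallow', 'too few internal links']
```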

4. Cross-Market Consistency

Global organizations need flexibility, but flexibility without oversight quickly turns into fragmentation. A governing CoE ensures that deviations from global standards are visible, intentional, and accountable. It does not eliminate local autonomy; it prevents unintentional conflict.

This requires authority over:

- Global standard adoption.

- Local deviation review and approval.

- Hreflang governance.

- Language-versus-market resolution (deciding whether a page targets a language, a country, or both).

- Canonical ownership rules.

Without centralized oversight, local teams often send conflicting signals that quietly erode global visibility.
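Hreflang is a concrete case: the annotations are expected to be reciprocal, so a market that adds or drops an alternate on its own can invalidate the pairing for another market. The sketch below flags missing return links. It assumes the annotations have already been crawled into a dictionary, and `missing_return_links` is a hypothetical helper name.

```python
def missing_return_links(hreflang_map):
    """Find hreflang pairs that lack a return link.

    hreflang_map: {page_url: {lang_code: alternate_url}}
    Returns sorted (source, target) pairs where source lists target as an
    alternate but target does not list source back.
    """
    problems = []
    for source, alternates in hreflang_map.items():
        for target in alternates.values():
            if target == source:
                continue  # a self-reference needs no return link
            back_links = hreflang_map.get(target, {}).values()
            if source not in back_links:
                problems.append((source, target))
    return sorted(problems)


pages = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    # The German page was updated locally and no longer points back.
    "https://example.com/de/": {"de": "https://example.com/de/"},
}
print(missing_return_links(pages))
# [('https://example.com/en/', 'https://example.com/de/')]
```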

5. Measurement & Accountability Integration

Finally, governance fails if it cannot be measured and enforced. A real SEO CoE controls not just reporting, but accountability. If search performance represents systemic risk, it must be monitored and escalated like one.

That includes ownership of:

- SEO performance standards.

- Reporting frameworks.

- Shared key performance indicators across departments.

- Compliance monitoring.

- Escalation authority and executive visibility.

SEO must be measured as infrastructure, not as a marketing channel. When failures carry organizational consequences, governance becomes real.
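Compliance monitoring with escalation can be as simple as a pass rate per market compared against an agreed threshold. In this sketch, `MarketAudit`, `markets_to_escalate`, and the 90% default threshold are illustrative assumptions, not a recommended benchmark.

```python
from dataclasses import dataclass


@dataclass
class MarketAudit:
    """Hypothetical result of running the CoE's standard checks on one market."""
    market: str
    checks_passed: int
    checks_total: int

    @property
    def compliance(self):
        # A market with no checks run is treated as non-compliant.
        if self.checks_total == 0:
            return 0.0
        return self.checks_passed / self.checks_total


def markets_to_escalate(audits, threshold=0.9):
    """Return the markets whose compliance rate falls below the threshold."""
    return [a.market for a in audits if a.compliance < threshold]


audits = [
    MarketAudit("US", 48, 50),
    MarketAudit("DE", 40, 50),
    MarketAudit("JP", 0, 0),
]
print(markets_to_escalate(audits))
# ['DE', 'JP']
```

Treating an unaudited market as non-compliant is a deliberate choice in this sketch: a market that has skipped its checks is surfaced to leadership rather than passing by default.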

Control Vs. Influence: The Critical Difference

Most SEO Centers of Excellence operate through influence. They publish best practices, provide training, and offer guidance in the hope that teams will comply. When alignment exists and incentives are shared, this approach can work.

Enterprise environments rarely meet those conditions.

Influence depends on cooperation. It assumes teams will voluntarily prioritize SEO standards alongside their own objectives. When deadlines tighten or tradeoffs arise, influence is the first thing to give way. What remains are local decisions optimized for speed, risk avoidance, or revenue, not for long-term discoverability.

Governance operates differently.

A governing SEO CoE does not dictate how teams build solutions, but it does define the non-negotiable requirements those solutions must satisfy. It establishes mandatory operating standards for templates, structured data, entity representation, and market compliance, and it embeds those standards into workflows before assets are released.

This distinction is often misunderstood as “SEO trying to control everything.” In reality, governance is about oversight, not micromanagement. Engineering still engineers. Product still prioritizes. Markets still localize. But all of them operate within enforced constraints that protect search visibility as a shared enterprise asset.

That difference becomes visible in where authority actually exists. Advisory CoEs can recommend standards, but they cannot enforce template compliance, approve deviations, require pre-launch checks, or escalate violations. Governing CoEs can. Enterprise SEO only scales under that model. Not because teams agree with SEO, but because the organization has decided that discoverability is important enough to be protected by enforceable standards.

Organizational Impact Of A Governing CoE

When SEO governance is institutionalized, the effects extend well beyond search metrics.

Structural errors begin to decline, not because teams are fixing issues faster, but because many of those issues never make it to production. Standards enforced upstream prevent the same mistakes from being replicated across templates, markets, and releases. SEO shifts from remediation to prevention.

Visibility improves for the same reason. When signals are consistent and scalable, search systems can form a stable understanding of the brand. That consistency compounds over time, reinforcing eligibility rather than constantly resetting it.

Markets also begin to align more naturally. Governance doesn’t eliminate local flexibility, but it requires that deviations be explicit, reviewed, and justified. Instead of fragmentation happening quietly, exceptions become visible and accountable. Global coherence stops being accidental.

In AI-driven discovery, this coherence becomes even more valuable. Eligibility improves not through tactical optimization, but because entities, content, and relationships are structured in ways machines can reliably interpret. Brands stop competing on individual pages and start competing as systems.

Perhaps most noticeably, internal friction drops. When SEO standards are embedded into workflows, teams stop renegotiating fundamentals on every launch. The same conversations don’t have to happen repeatedly, and escalation becomes the exception rather than the norm.

Counterintuitively, this increases speed. When governance defines the rules of the road, execution accelerates because teams can focus on building within known constraints instead of debating them after the fact.

The Final Reality

Enterprise SEO rarely fails because teams aren’t trying hard enough. It fails because governance is missing.

Over the years, I’ve helped design and implement Search and Web Effectiveness Centers of Excellence inside large organizations. The ones that worked best all shared a common trait: They had real authority to guide and enforce compliance. Not heavy-handed control, but clear standards backed by the ability to say no when those standards were ignored.

What’s often misunderstood is that these governing CoEs were also the most collaborative. Because authority was clear, teams didn’t have to renegotiate fundamentals on every project. Everyone understood the shared goals and the mutual benefits of operating as a coordinated system rather than as isolated functions. Governance removed friction instead of creating it.

Those CoEs succeeded by treating search visibility as a team sport. Cross-department initiatives weren’t exceptions; they were the operating norm. Development, content, product, and marketing aligned around enterprise objectives because the value of doing so was explicit and reinforced through process, not persuasion.

By contrast, CoEs built solely to advise rarely achieved that alignment. Without enforcement, standards became optional, exceptions multiplied, and collaboration depended on goodwill rather than structure.

Modern search leaves little room for that model. Organizations that want to maintain control over how they are discovered, understood, and recommended must move beyond documentation and consensus-building alone. Governance is what makes collaboration durable. It turns good intentions into repeatable outcomes.

In an AI-driven search environment, that shift is no longer aspirational. It is the difference between being represented accurately and being replaced quietly by sources that are.

Featured Image: Masha_art/Shutterstock

https://www.searchenginejournal.com/the-modern-seo-center-of-excellence-governance-not-guidelines/566097/