On Tuesday, OpenAI announced that it is experimenting with adding a form of long-term memory to ChatGPT that will allow it to remember details between conversations. You can ask ChatGPT to remember something, see what it remembers, and ask it to forget. Currently, it’s only available to a small number of ChatGPT users for testing.

So far, large language models have typically used two types of memory: one baked into the AI model during the training process (before deployment) and an in-context memory (the conversation history) that persists for the duration of your session. Usually, ChatGPT forgets what you have told it during a conversation once you start a new session.

Various projects have experimented with giving LLMs a memory that persists beyond a context window. (The context window is the hard limit on the number of tokens the LLM can process at once.) The techniques include dynamically managing context history, compressing previous history through summarization, linking to vector databases that store information externally, or simply periodically injecting information into a system prompt (the instructions ChatGPT receives at the beginning of every chat).
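
To make the last of those techniques concrete, here is a minimal Python sketch of injecting saved facts into a system prompt. It is an illustration only, not a description of how ChatGPT works: the `MemoryStore` class, the `build_system_prompt` function, and the prompt wording are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical store of user facts that outlives any single chat."""

    items: list[str] = field(default_factory=list)

    def remember(self, fact: str) -> None:
        # Skip exact duplicates so the prompt does not grow needlessly.
        if fact not in self.items:
            self.items.append(fact)

    def forget(self, fact: str) -> None:
        # Remove the fact if present; do nothing otherwise.
        self.items = [item for item in self.items if item != fact]


def build_system_prompt(base: str, memory: MemoryStore) -> str:
    """Append saved facts to the instructions sent at the start of a chat."""
    if not memory.items:
        return base
    notes = "\n".join(f"- {item}" for item in memory.items)
    return f"{base}\n\nKnown facts about the user:\n{notes}"


# Assumed example data, borrowed from the use cases OpenAI describes.
memory = MemoryStore()
memory.remember("Runs a coffee shop")
memory.remember("Prefers meeting notes as bullet points")

prompt = build_system_prompt("You are a helpful assistant.", memory)
print(prompt)
```

Because the facts live outside any one conversation, a new session could start with the same prompt, and forgetting a fact removes it from every later session. The trade-off is that each saved fact consumes part of the context window.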

OpenAI hasn’t explained which technique it uses here, but the implementation reminds us of Custom Instructions, a feature OpenAI introduced in July 2023 that lets users add their own text to the ChatGPT system prompt to change its behavior.

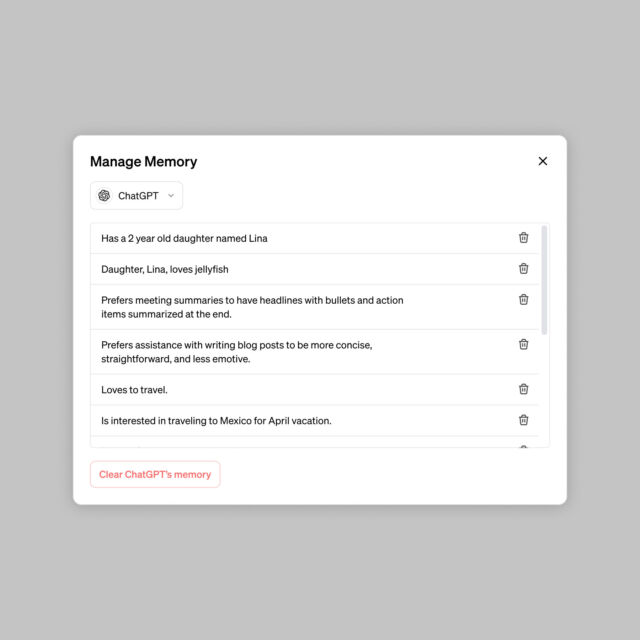

OpenAI’s examples of possible applications for the memory feature include explaining how you prefer your meeting notes to be formatted, telling it you run a coffee shop and having ChatGPT assume that’s what you’re talking about, keeping information about your toddler who loves jellyfish so it can generate relevant graphics, and remembering preferences for kindergarten lesson plan designs.

Also, OpenAI says that memories may help ChatGPT Enterprise and Team subscribers work together better since shared team memories could retain specific document formatting preferences or which programming frameworks your team uses. And OpenAI plans to bring memories to GPTs soon, with each GPT having its own siloed memory capabilities.

Memory control

Obviously, any tendency to remember information brings privacy implications. You should already know that sending information to OpenAI for processing on remote servers introduces the possibility of privacy leaks and that OpenAI trains AI models on user-provided information by default unless conversation history is disabled or you’re using an Enterprise or Team account.

Along those lines, OpenAI says that your saved memories are also subject to OpenAI training use unless you meet the criteria listed above. Still, the memory feature can be turned off completely. Additionally, the company says, “We’re taking steps to assess and mitigate biases, and steer ChatGPT away from proactively remembering sensitive information, like your health details—unless you explicitly ask it to.”

Users will also be able to control what ChatGPT remembers using a “Manage Memory” interface that lists memory items. “ChatGPT’s memories evolve with your interactions and aren’t linked to specific conversations,” OpenAI says. “Deleting a chat doesn’t erase its memories; you must delete the memory itself.”

ChatGPT’s memory features are not currently available to every ChatGPT account, so we have not experimented with them yet. Access during this testing period appears to be random among ChatGPT (free and paid) accounts for now. “We are rolling out to a small portion of ChatGPT free and Plus users this week to learn how useful it is,” OpenAI writes. “We will share plans for broader roll out soon.”

https://arstechnica.com/?p=2003078