For now, the lab version has an anemic field of view of just 11.7 degrees, far smaller than that of a Magic Leap 2 or even a Microsoft HoloLens.

But Stanford’s Computational Imaging Lab has an entire page with visual aid after visual aid that suggests it could be onto something special: a thinner stack of holographic components that could nearly fit into standard glasses frames, and be trained to project realistic, full-color, moving 3D images that appear at varying depths.

Like other AR eyeglasses, they use waveguides, components that guide light through the glasses and into the wearer’s eyes. But the researchers say they’ve developed a unique “nanophotonic metasurface waveguide” that can “eliminate the need for bulky collimation optics,” and a “learned physical waveguide model” that uses AI algorithms to drastically improve image quality. The study says the models “are automatically calibrated using camera feedback.”

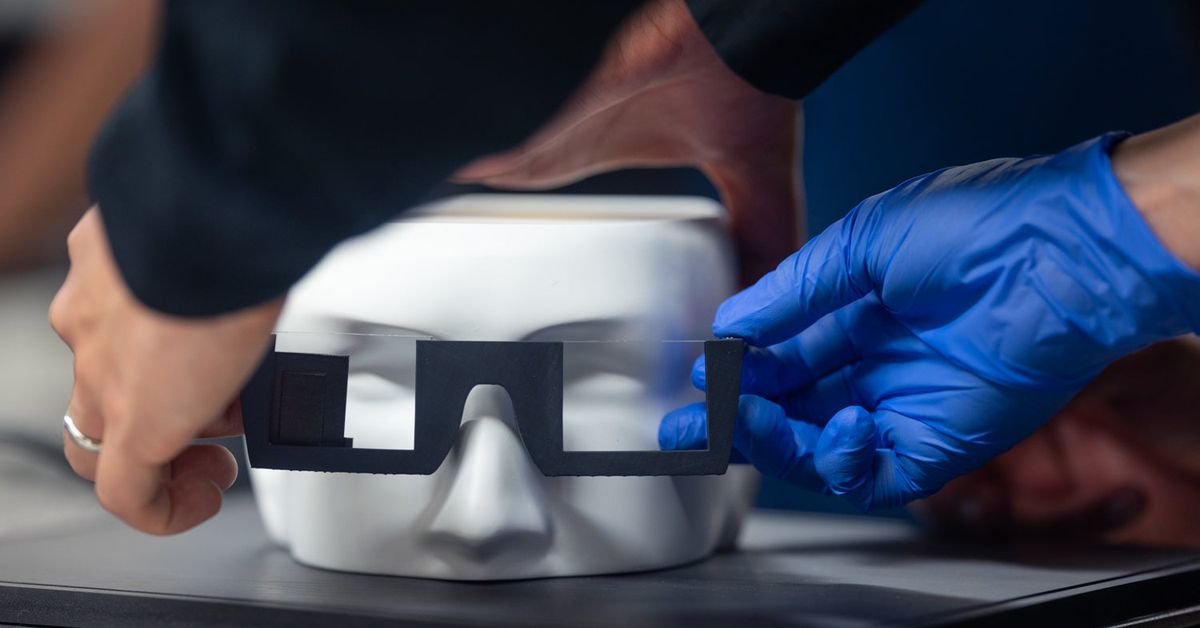

Although the Stanford tech is currently just a prototype, with working models that appear to be attached to a bench and 3D-printed frames, the researchers are looking to disrupt the current spatial computing market that also includes bulky passthrough mixed reality headsets like Apple’s Vision Pro, Meta’s Quest 3, and others.

Postdoctoral researcher Gun-Yeal Lee, who helped write the paper published in Nature, says there’s no other AR system that compares in both capability and compactness.

https://www.theverge.com/2024/5/9/24153092/stanford-ai-holographic-ar-glasses-3d-imaging-research